Error and Warning Messages

This is the list of all error and warning messages that TIM can return. Apart from these, there are also error messages generated automatically by the JSON schema validator (like the one in the example above). Note that the string ### in a listed message is a placeholder: in the actual message it is replaced with dynamic content (e.g. predictor name, timestamp, ...).
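Because ### stands for dynamic content, a client that wants to recognize a returned message has to match it against the template rather than compare strings directly. The following is a minimal sketch of one way to do that. It is not part of TIM; the helper names `template_to_regex` and `match_message` are hypothetical, and the template list is just a small sample copied from this page.

```python
import re

# A small sample of templates copied from the lists on this page.
TEMPLATES = [
    "Getting job ### failed.",
    "Getting metadata of dataset version ### of dataset ### failed.",
    "Predictor ###1 has an outlier value that is ###2% times range (max-min) "
    "higher than the in-sample maximum.",
]


def template_to_regex(template):
    """Turn a template with ### placeholders into an anchored regex (hypothetical helper)."""
    # Split on the placeholder, which may carry a number (###1, ###2, ...).
    literal_parts = re.split(r"###\d*", template)
    # Escape the literal text and capture whatever replaced each placeholder.
    pattern = "(.+?)".join(re.escape(part) for part in literal_parts)
    return re.compile("^" + pattern + "$")


def match_message(message):
    """Return (template, captured values) for the first matching template, else None."""
    for template in TEMPLATES:
        found = template_to_regex(template).match(message)
        if found:
            return template, list(found.groups())
    return None
```

For example, `match_message("Getting job 42 failed.")` yields the template `"Getting job ### failed."` together with the captured value `"42"`. Messages produced by the JSON schema validator are not covered by such templates and return `None` here.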

Model building and forecasting error messages

- Target variable is different in the data than in the model

- Model uses a different holiday variable than the data.

- Model uses a different sampling period than the dataset.

- No training data provided.

- PredictionTo or predictionFrom time unit is shorter than the sampling rate.

- PredictionTo or predictionFrom time unit of data sampled in months can only be given in samples (S).

- PredictionTo or predictionFrom time unit can not be given in months if data sampled differently than in months.

- You have chosen to forecast no timestamps. Check your predictionTo and predictionFrom.
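The three time unit messages above describe rules that can be checked on the client side before a request is sent. The sketch below illustrates those rules only. The unit names (`"minute"`, `"hour"`, `"day"`, `"month"`), the function `check_time_unit` and its parameters are assumptions made for this example; only the samples code `S` is taken from the messages themselves, and the actual request format may differ.

```python
# Hypothetical unit names with their length in seconds (assumption for this sketch).
UNIT_SECONDS = {"second": 1, "minute": 60, "hour": 3600, "day": 86400}

MSG_TOO_SHORT = (
    "PredictionTo or predictionFrom time unit is shorter than the sampling rate."
)
MSG_MONTHLY_ONLY_SAMPLES = (
    "PredictionTo or predictionFrom time unit of data sampled in months "
    "can only be given in samples (S)."
)
MSG_NO_MONTHS = (
    "PredictionTo or predictionFrom time unit can not be given in months "
    "if data sampled differently than in months."
)


def check_time_unit(unit, sampling_seconds=None, monthly_data=False):
    """Return the matching message from the list above, or None if the unit is acceptable."""
    if monthly_data:
        # Monthly data accepts samples only.
        return None if unit == "S" else MSG_MONTHLY_ONLY_SAMPLES
    if unit == "S":
        # Samples are always at least as long as the sampling period.
        return None
    if unit == "month":
        return MSG_NO_MONTHS
    if UNIT_SECONDS[unit] < sampling_seconds:
        return MSG_TOO_SHORT
    return None
```

With hourly data (`sampling_seconds=3600`), a unit of `"minute"` is rejected as shorter than the sampling rate, while `"day"` and `"S"` pass.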

Job execution error messages

- Tabular result could not be saved to database.

- Model could not be saved to database.

- Root cause analysis could not be saved to database.

- Error measures could not be saved to database.

- Worker version could not be saved to database.

- Job info could not be saved to database.

- Getting job parameters for job ### failed.

- Getting model for job ### failed.

- Getting job ### failed.

- Getting job data failed.

- Getting dataset id failed.

- Getting latest version of dataset ### failed.

- Getting metadata of dataset version ### of dataset ### failed.

- Getting slice from dataset version ### of dataset ### failed.

- Getting license metadata failed.

- Parsing model failed.

- Verifying model signature failed. The model was modified.

- Internal error, please contact support (support@tangent.works).

- We can't process your request at the moment. Please contact support@tangent.works.

Model building and forecasting warning messages

- Could not evaluate some production forecast. There might be a gap in some of the predictors in its most recent records. Try changing the imputationLength.

- Predictor ###1 has an outlier value that is ###2% times range (max-min) higher than the in-sample maximum.

- Predictor ###1 has an outlier value that is ###2% times range (max-min) lower than the in-sample minimum.

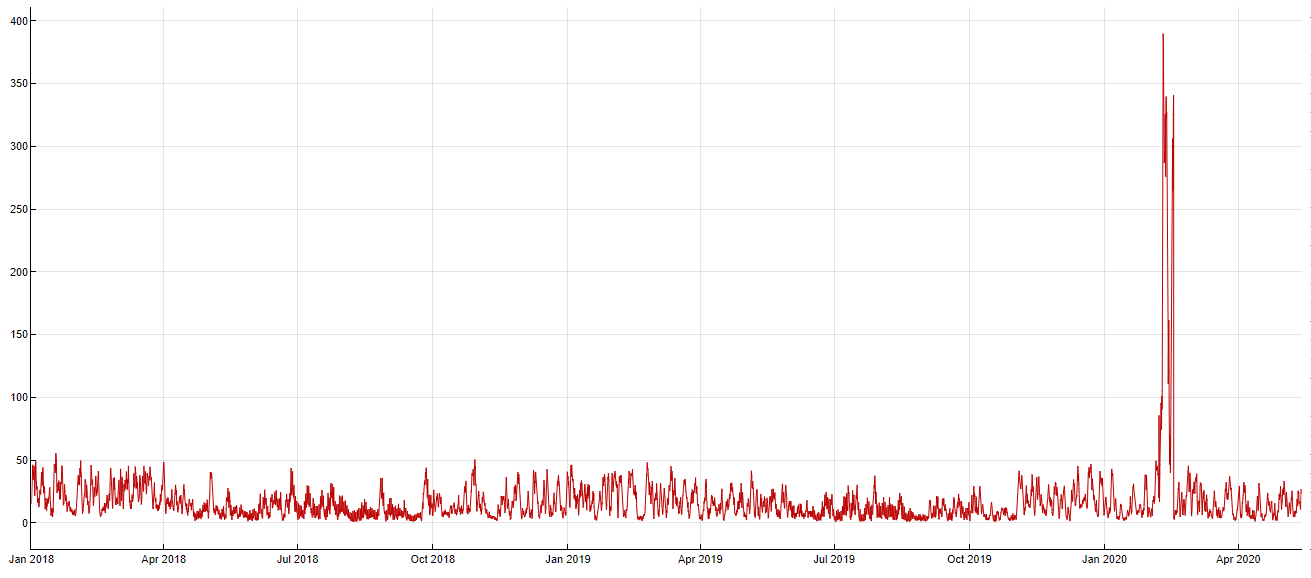

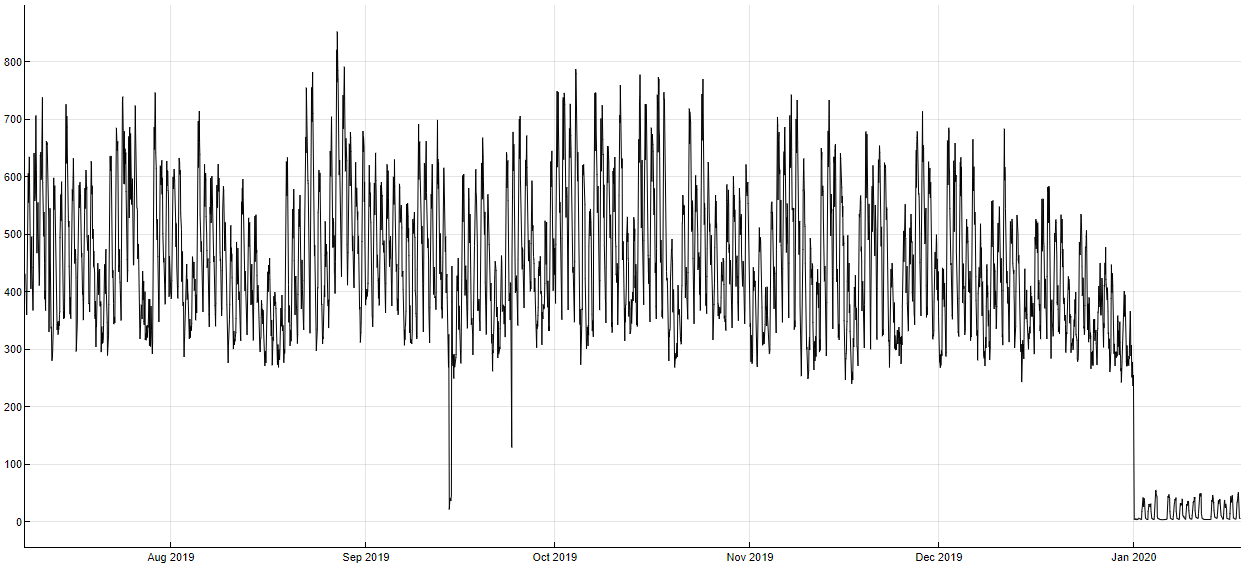
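The percentage in the two outlier warnings above can be read as the distance of the new value from the in-sample maximum (or minimum), expressed relative to the in-sample range (max-min). The sketch below follows that reading; the function name `range_excess_percent` is hypothetical, and the exact computation used by TIM may differ.

```python
def range_excess_percent(in_sample, value):
    """Return ("higher" | "lower", percent of in-sample range), or None if not an outlier.

    Assumed reading of the warning text: the excess beyond the in-sample
    maximum or minimum, divided by the in-sample range (max - min).
    """
    low, high = min(in_sample), max(in_sample)
    span = high - low
    if span == 0:
        # A constant predictor has no range, so the ratio is undefined.
        return None
    if value > high:
        return "higher", 100.0 * (value - high) / span
    if value < low:
        return "lower", 100.0 * (low - value) / span
    return None
```

For in-sample values between 10 and 30 (range 20), a new value of 40 lies 10 above the maximum, which is 50% of the range; a new value of 5 lies 5 below the minimum, which is 25% of the range.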

- Predictor ### contains an outlier or a structural change in its most recent records. Sometimes the data used for model building and prediction is faulty, but a simple comparison of minimum and maximum values is not enough to detect it (see below). That is why we perform additional checks, such as a moving variance check on the last 10 percent of your dataset, to make sure that the most recent records preceding the prediction you would like to make do not differ significantly in nature from the rest of the data. This warning is informative only; it does not change the model building or prediction process itself.

- Not returning in-sample forecasts, the response would be too big.

- Predictor ### has too many missing values and will be discarded.

- Your backtesting length is bigger than 90 percent of the training data. Models and predictions might be inaccurate or empty.

- Your dataset exceeds the memory limit by ###1%. Dropping ###2% of oldest observations. If you wish to keep all observations, try setting memoryPreprocessing to false. If you override this setting, the worker might run out of memory and the process will fail. However, this is not necessarily the case, especially if many of your predictors do not have high predictive power.

- Your rolling window is not divisible by 1 day, but your dataset and Model Zoo have daily cycle. Some backtest forecasts might not be evaluated.

- Target not provided, no new model will be trained.
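The "outlier or a structural change" warning above mentions a moving variance check on the last 10 percent of the dataset. The page does not specify how that check is computed, so the following is only an illustrative sketch of the general idea: compare the rolling variance in the most recent 10 percent of a predictor with the rolling variance in the rest. The window size, the ratio threshold and both function names are assumptions made for this example.

```python
from statistics import pvariance


def rolling_variance(values, window):
    """Population variance of every consecutive window of the given size."""
    return [pvariance(values[i:i + window]) for i in range(len(values) - window + 1)]


def recent_variance_shift(values, window=5, tail_fraction=0.10, ratio=4.0):
    """Flag a predictor whose most recent records vary far more than the rest.

    All defaults are assumptions for illustration, not documented settings.
    """
    tail_len = max(window, int(len(values) * tail_fraction))
    head, tail = values[:-tail_len], values[-tail_len:]
    if len(head) < window:
        raise ValueError("series is too short for the chosen window")
    head_max = max(rolling_variance(head, window))
    tail_max = max(rolling_variance(tail, window))
    if head_max == 0:
        # A constant history: any variation in the tail counts as a change.
        return tail_max > 0
    return tail_max / head_max > ratio
```

A series that alternates between 0 and 1 throughout is not flagged, whereas the same series whose last 10 percent alternates between 0 and 10 is flagged, even though a plain minimum and maximum comparison on a noisier history could miss such a change.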